Fortran (/ˈfɔːrtræn/; formerly FORTRAN) is a general-purpose, compiled, imperative programming language that is especially suited to numeric computation and scientific computing. Fortran was originally developed by IBM in the 1950s for scientific and engineering applications, and subsequently came to dominate scientific computing. It has been in use for over six decades in computationally intensive areas such as numerical weather prediction, finite element analysis, computational fluid dynamics, geophysics, computational physics, crystallography and computational chemistry. It is a popular language for high-performance computing and is used for programs that benchmark and rank the world's fastest supercomputers.

Major implementations include Absoft, Cray, GFortran, G95, IBM XL Fortran, Intel, Hitachi, Lahey/Fujitsu, Numerical Algorithms Group, Open Watcom, PathScale, PGI, Silverfrost, Oracle Solaris Studio, and others. Languages influenced by Fortran include ALGOL 58, BASIC, C, Chapel, CMS-2, DOPE, Fortress, PL/I, PACT I, MUMPS, IDL, and Ratfor.

The standard way to parallelize C/C++/Fortran is by using OpenMP. However, R’s mechanics officially don’t allow OpenMP to be used by multiple languages in the same package. While it may be forced, the forcing violates R norms and relies on some default linker flags being the same across languages. Even now, December 2018, the Fortran mechanics for OpenMP are changing. So to test the languages, I wrote two small packages: one exclusively C++ using Rcpp and one using the C/Fortran interface. In the end, I did violate those norms to show some pure C calls which were put into the Fortran package. While this will work for now and for this post, it is not good practice and should be avoided.

In base R, the pure translation of the calculation would be the nested use of pmin and pmax. There is one variant: the documentation for the two functions also describes a .int version for both, which are “faster internal versions only used when all arguments are atomic vectors and there are no classes.”

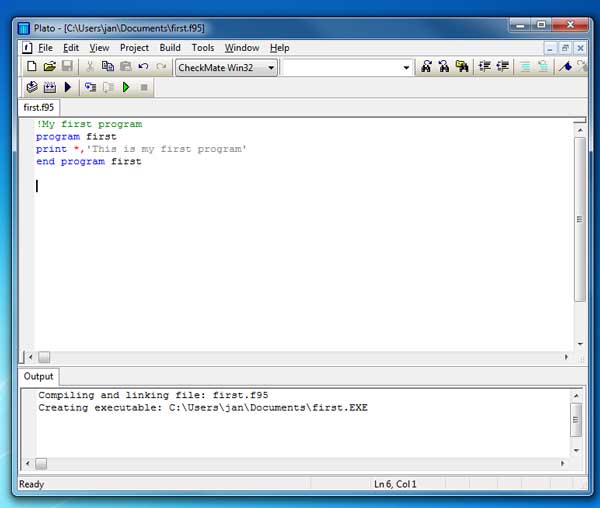


In each language tested, the code will test coarse-grained, fine-grained, and combined parallelism. More parallelism is not automatically better: even for large, or huge, loads, the most parallelism is not always worth the overhead it brings.


Vectorization and multi-threading can be combined on today’s SIMD CPUs with multiple cores. In theory, either of these should be faster than single-thread serial calculation. In practice, however, there is a certain amount of overhead involved in setting up either kind of parallelism. Therefore, for small loads, it is not uncommon to find the serial versions to be the fastest.

For the packages I maintain on CRAN, I’ve found that, more often than not, Fortran will outperform C++ by a small margin, although not always. This was an opportunity to directly compare the performance of C++ and Fortran. Speed improvements often come from explicit parallelization of the compiled code.

In parallel computing, vectorization means using the multiple SIMD lanes in modern chips (SSE/AVX/AVX2). The loop is only performed in one thread, but it can be chunked inside the CPU. Consider a one-lane highway approaching a toll plaza: the speed limit remains the same, but at the tollbooth four cars can be processed at the same time. Parallelization means taking advantage of multiple cores. This is akin to splitting the one lane into several lanes, each one with its own speed limit and approach to its own toll plaza. Sometimes single-thread vectorization is called “fine-grain parallelism” and multi-thread parallelism is called “coarse-grain parallelism”.
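As a rough illustration of the two kinds of parallelism, the sketch below applies OpenMP pragmas to the same summation loop in C++. The function names (sum_serial, sum_simd, sum_threads, sum_both) are made up for this example and are not taken from the packages discussed here. It assumes a compiler with OpenMP enabled (for example, the -fopenmp flag in GCC or Clang); without it the pragmas are ignored and every variant simply runs serially.

```cpp
#include <vector>

// Serial baseline: one thread, no explicit vectorization hint.
double sum_serial(const std::vector<double>& x) {
    double total = 0.0;
    const long n = static_cast<long>(x.size());
    for (long i = 0; i < n; ++i) total += x[i];
    return total;
}

// Fine-grained: still one thread, but the compiler is asked to use SIMD lanes.
double sum_simd(const std::vector<double>& x) {
    double total = 0.0;
    const long n = static_cast<long>(x.size());
    #pragma omp simd reduction(+:total)
    for (long i = 0; i < n; ++i) total += x[i];
    return total;
}

// Coarse-grained: the iterations are split across multiple threads.
double sum_threads(const std::vector<double>& x) {
    double total = 0.0;
    const long n = static_cast<long>(x.size());
    #pragma omp parallel for reduction(+:total)
    for (long i = 0; i < n; ++i) total += x[i];
    return total;
}

// Combined: multiple threads, each vectorizing its own chunk of the loop.
double sum_both(const std::vector<double>& x) {
    double total = 0.0;
    const long n = static_cast<long>(x.size());
    #pragma omp parallel for simd reduction(+:total)
    for (long i = 0; i < n; ++i) total += x[i];
    return total;
}
```

All four functions compute the same sum and differ only in how the loop is scheduled. Because the parallel variants add the terms in a different order, results on general floating-point data may differ from the serial one in the last few bits.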

The two main languages which comprise the compiled code in R are C/C++ and Fortran. I’m not sure why, but I have a soft spot in my heart for Fortran, specifically the free-form, actually readable versions of Fortran from F90 onward.

The base R version of the function, built on nested pmin and pmax, is:

    LLC_r <- function(x, l, a) sum(pmax(0, pmin(x - a, l)))
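For comparison, a serial C++ counterpart of the same calculation might look like the sketch below. It is plain C++ rather than Rcpp, the name llc_serial is invented here, and it assumes that l and a are scalars while x is a vector. None of it is taken from the packages themselves.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical serial counterpart of LLC_r:
// sum over i of max(0, min(x[i] - a, l)), with scalar l and a (an assumption).
double llc_serial(const std::vector<double>& x, double l, double a) {
    double total = 0.0;
    for (double xi : x) {
        // Inner min caps the value at l; outer max floors it at 0,
        // mirroring pmax(0, pmin(x - a, l)) in the R version.
        total += std::max(0.0, std::min(xi - a, l));
    }
    return total;
}
```

For example, with x = {1, 5, 10, 20}, l = 8 and a = 4, the per-element values are 0, 1, 6 and 8, so the function returns 15.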